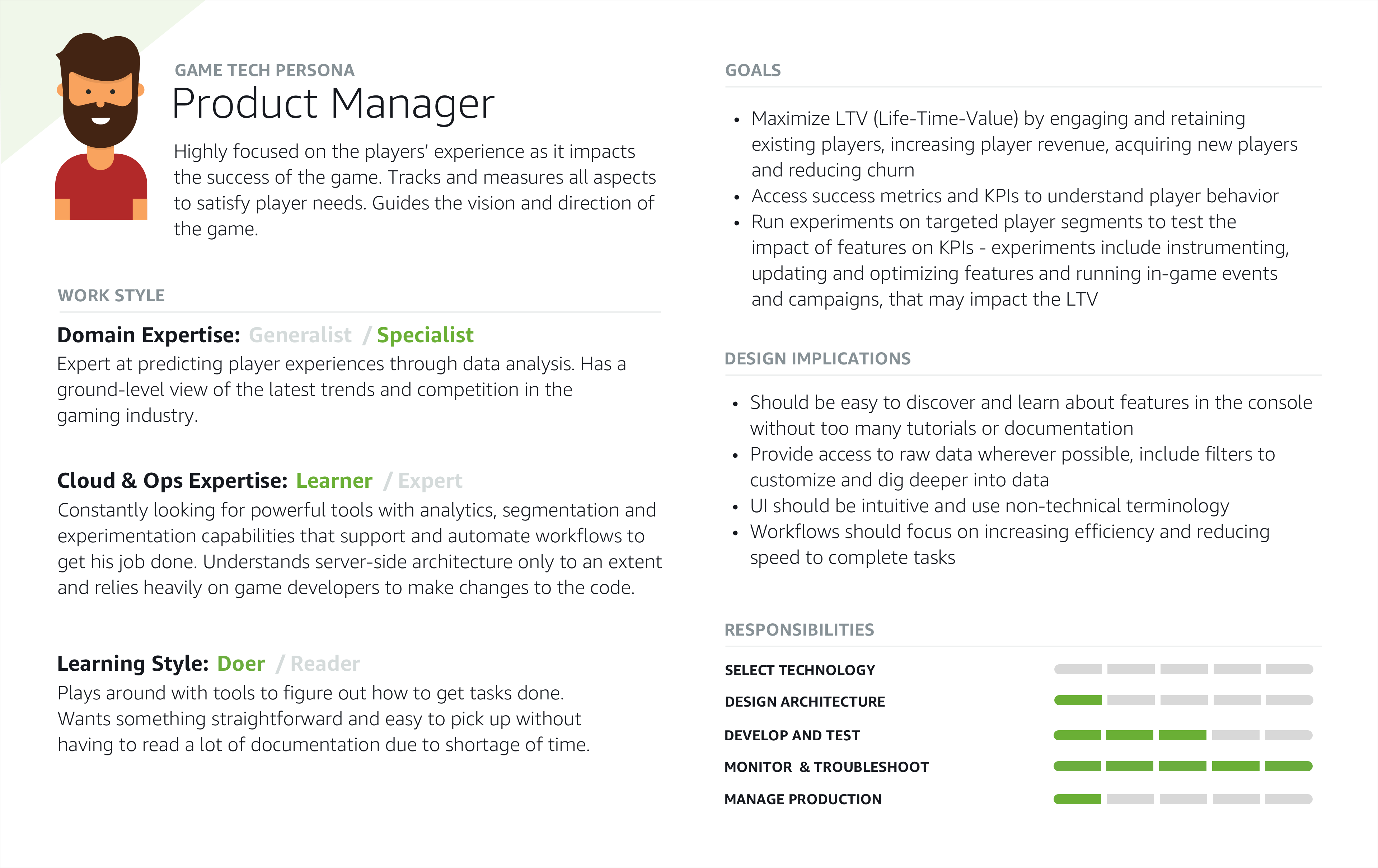

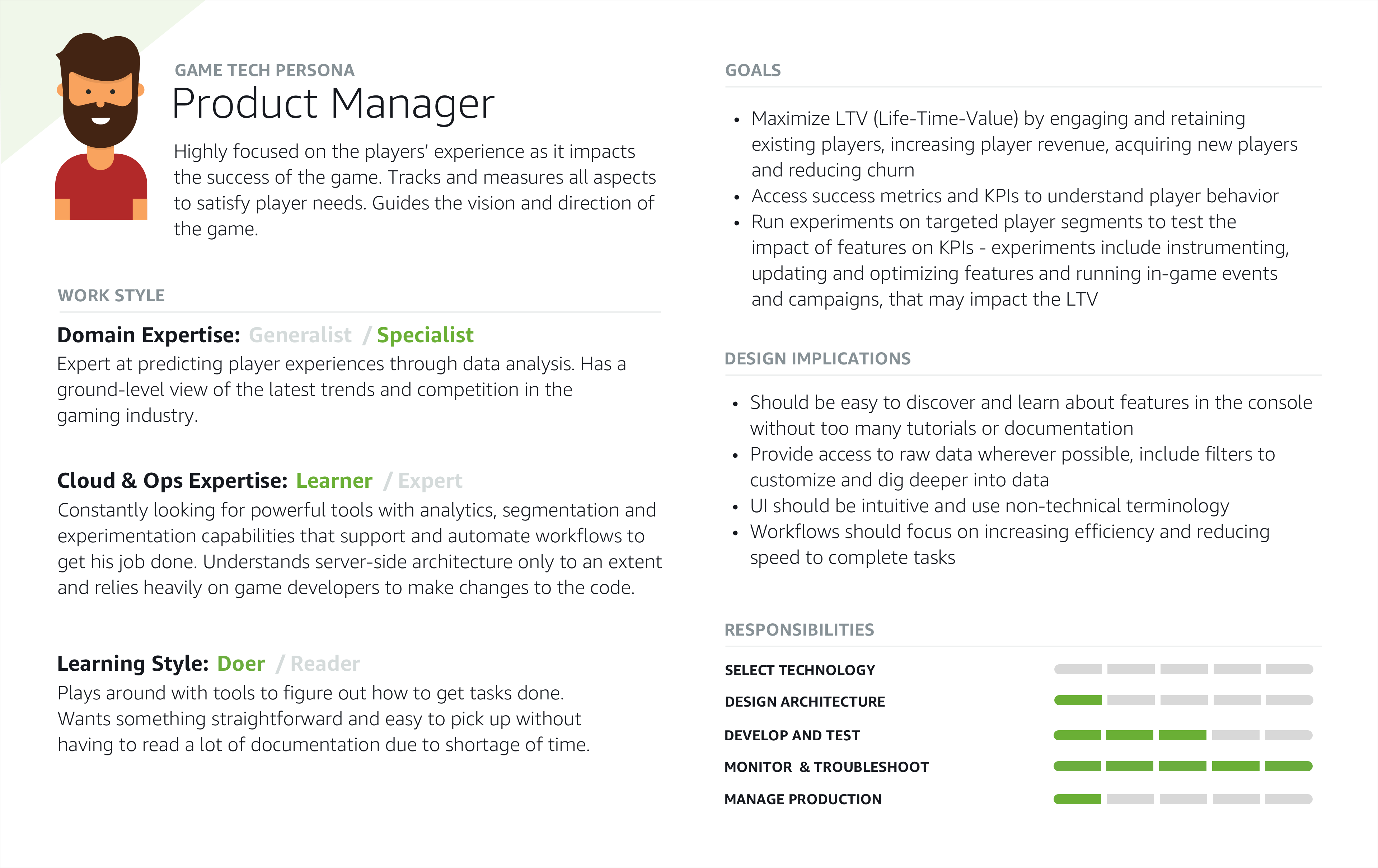

Product Manager

Primary Persona

Runs experiments on targeted player segments to assess how new features impact key game KPIs.

Reimagining A/B testing to optimize player engagement, retention, and monetization

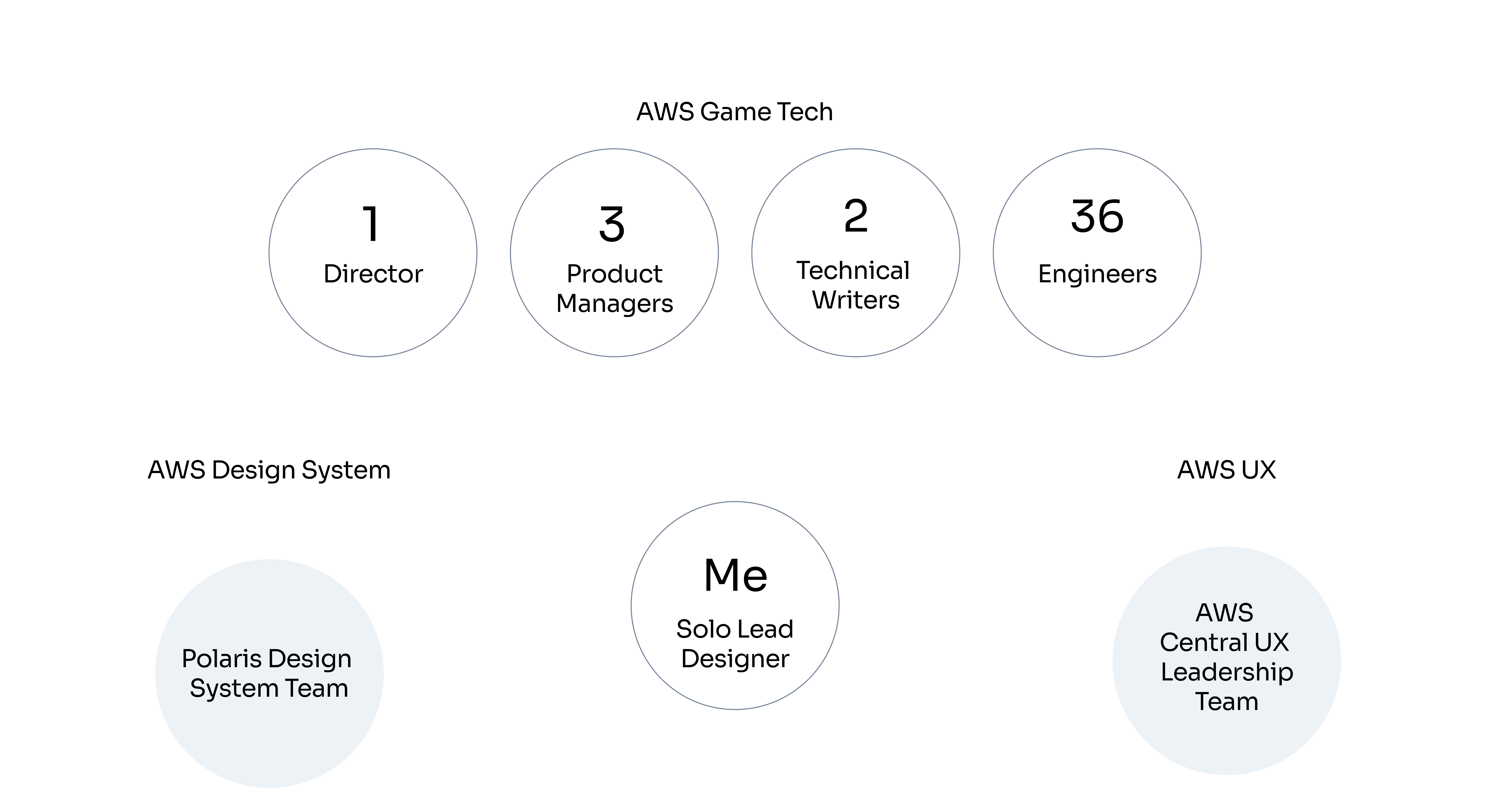

I reviewed and presented the designs to the Director at AWS Game Tech and other key stakeholders.

Game studios require ongoing optimization to improve engagement and monetization

Hard-coding game configurations for each player cohort is inconsistent and error-prone

Capturing meaningful performance data and identifying optimal configurations is tedious

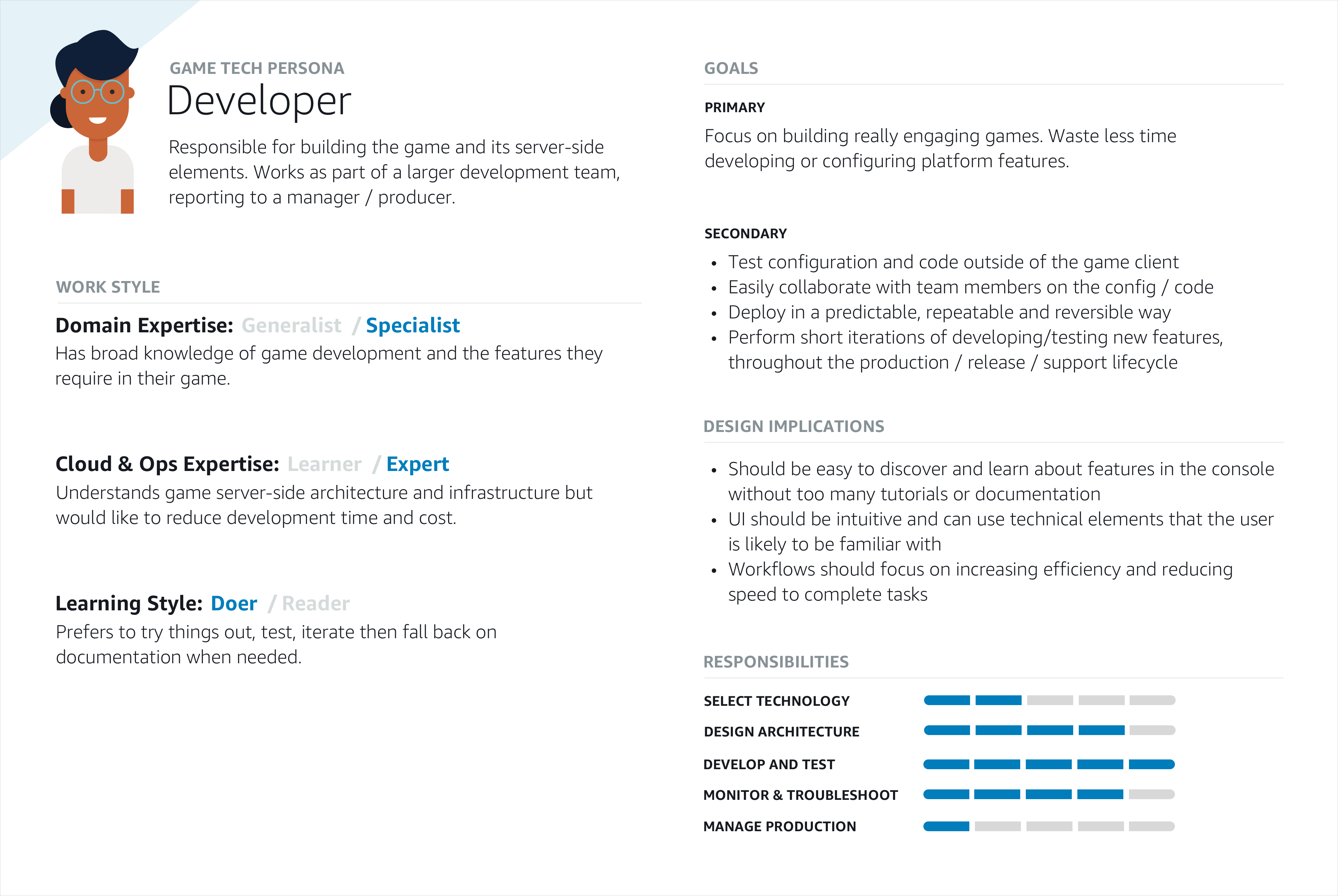

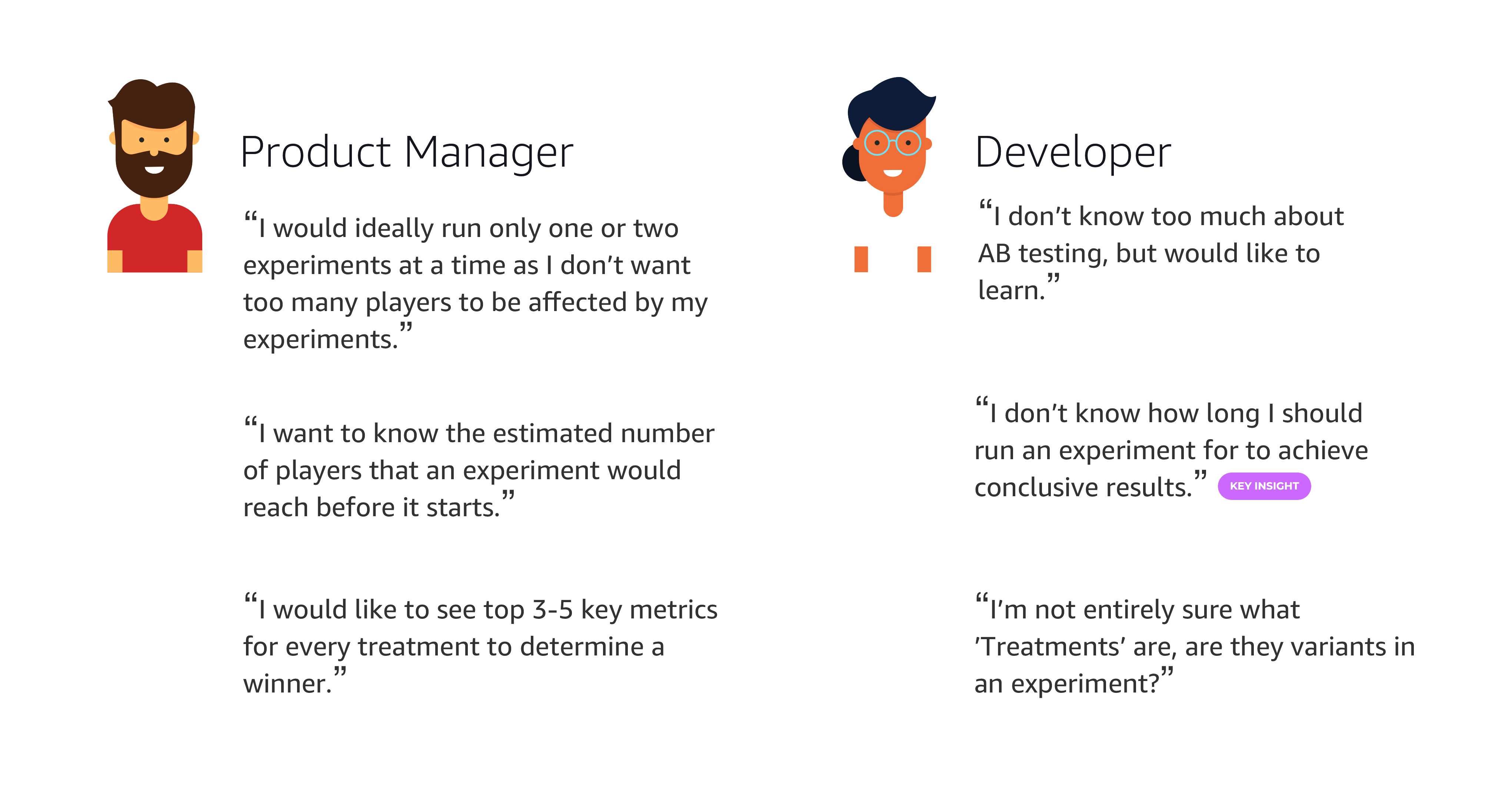

Secondary Persona

Mainly focuses on coding and development but also runs experiments when a PM is unavailable.

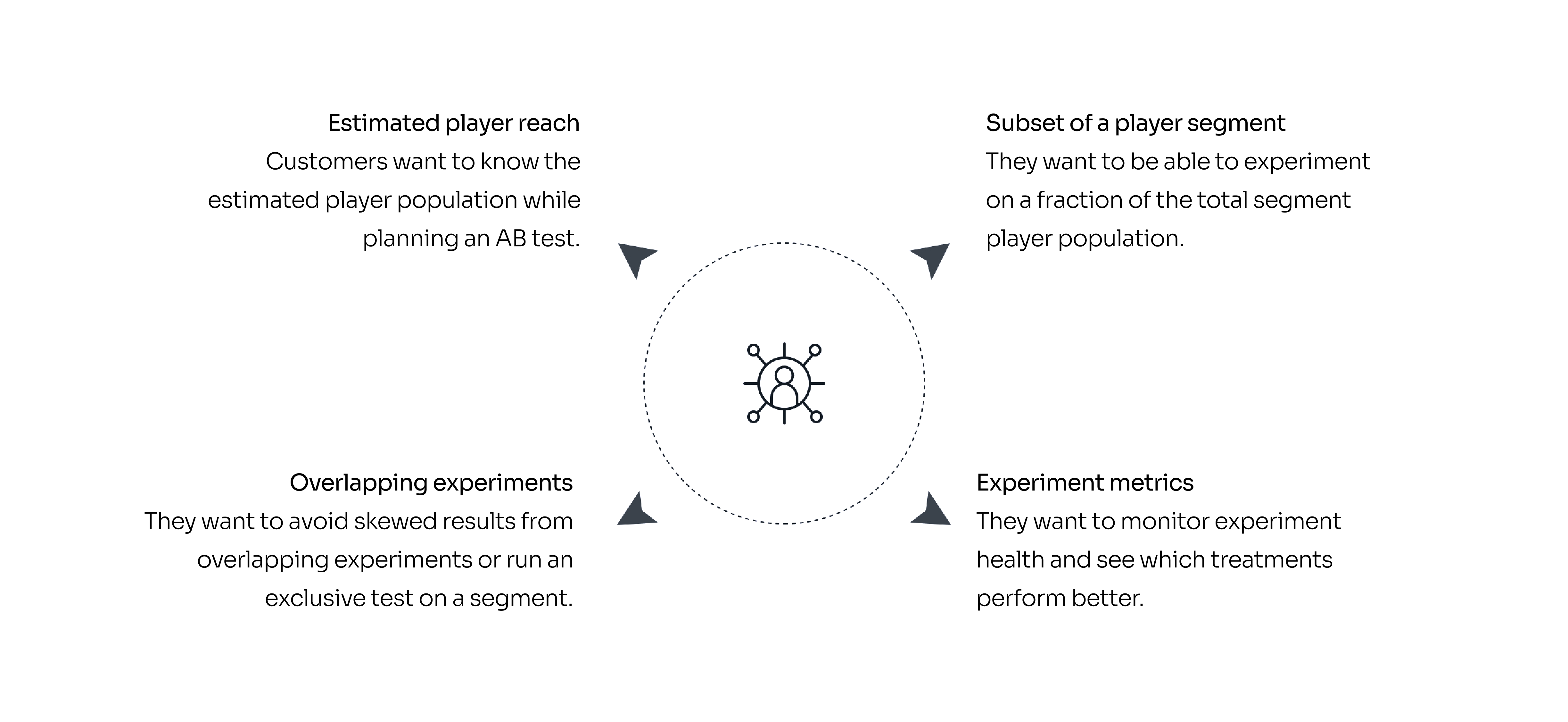

I facilitated four one-on-one customer workshops to research and document user needs and behaviors: what prompts customers to start an experiment and what their goals are, how they monitor an experiment while it is running, and how they analyze the results after it completes.

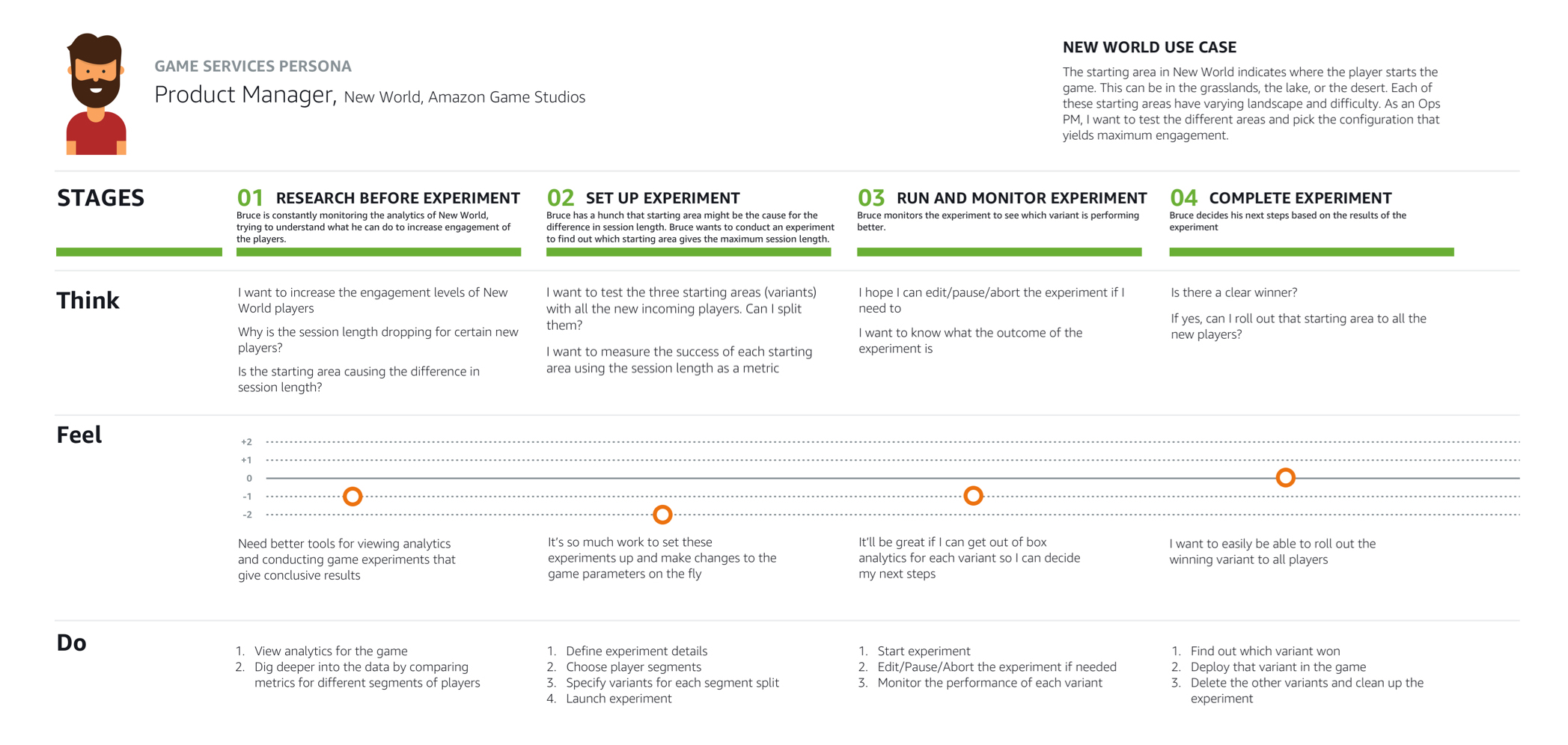

I built a customer journey map to clarify player needs and communicate the service's value during the Amazon CXBR review. It was grounded in a New World use case where a PM tested which start area produced the longest session length.

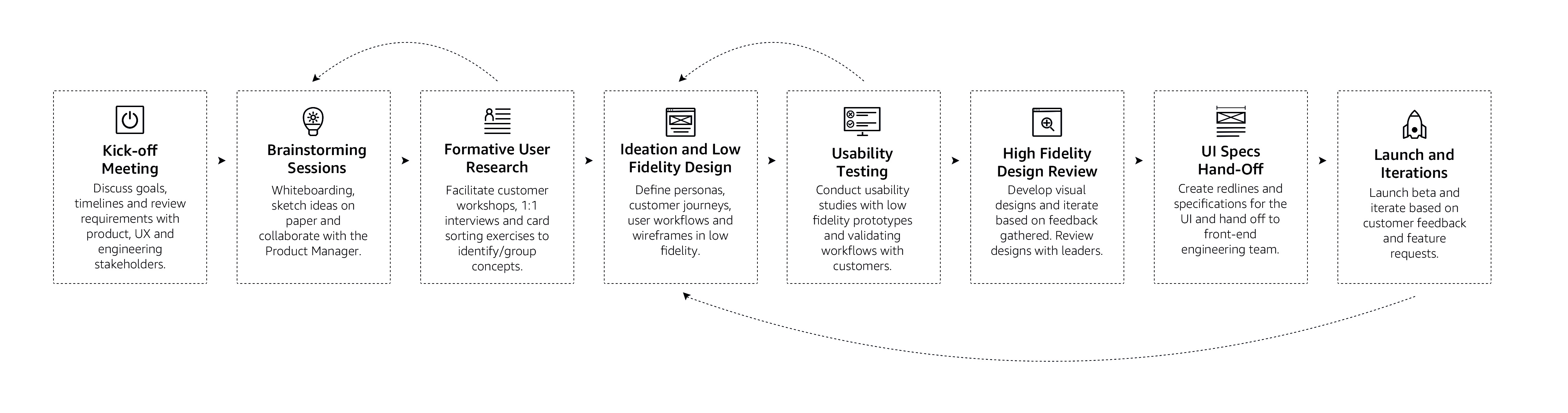

I generally begin by understanding the business requirements and identifying customer needs, then iterate on the designs while finding ways to validate them with customers throughout the process.

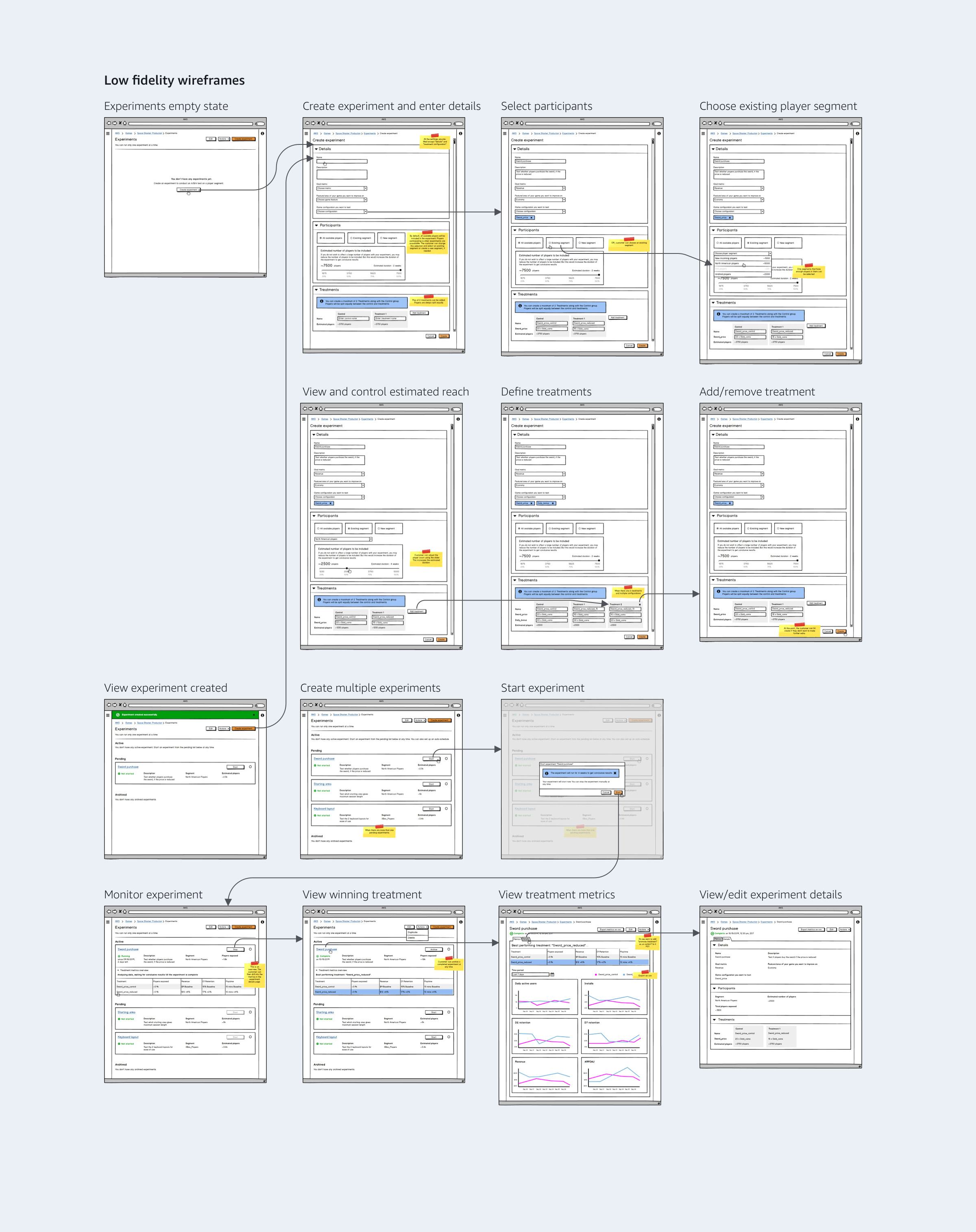

The qualitative data gathered during research informed the workflow. I also developed concepts and low-fidelity wireframes using Balsamiq, and reviewed them with the Director and Product Managers.

We validated the wireframes with 6 customers (3 Product Managers and 3 Game Developers) through a usability study that I organized and facilitated. I wrote the study plan for a task-based analysis to assess the effectiveness of the workflows.

I collaborated with the AWS design system team to understand existing patterns and identify gaps. I also worked with the PMs to prioritize features, starting with single experiments and planning multiple experiments based on user feedback.

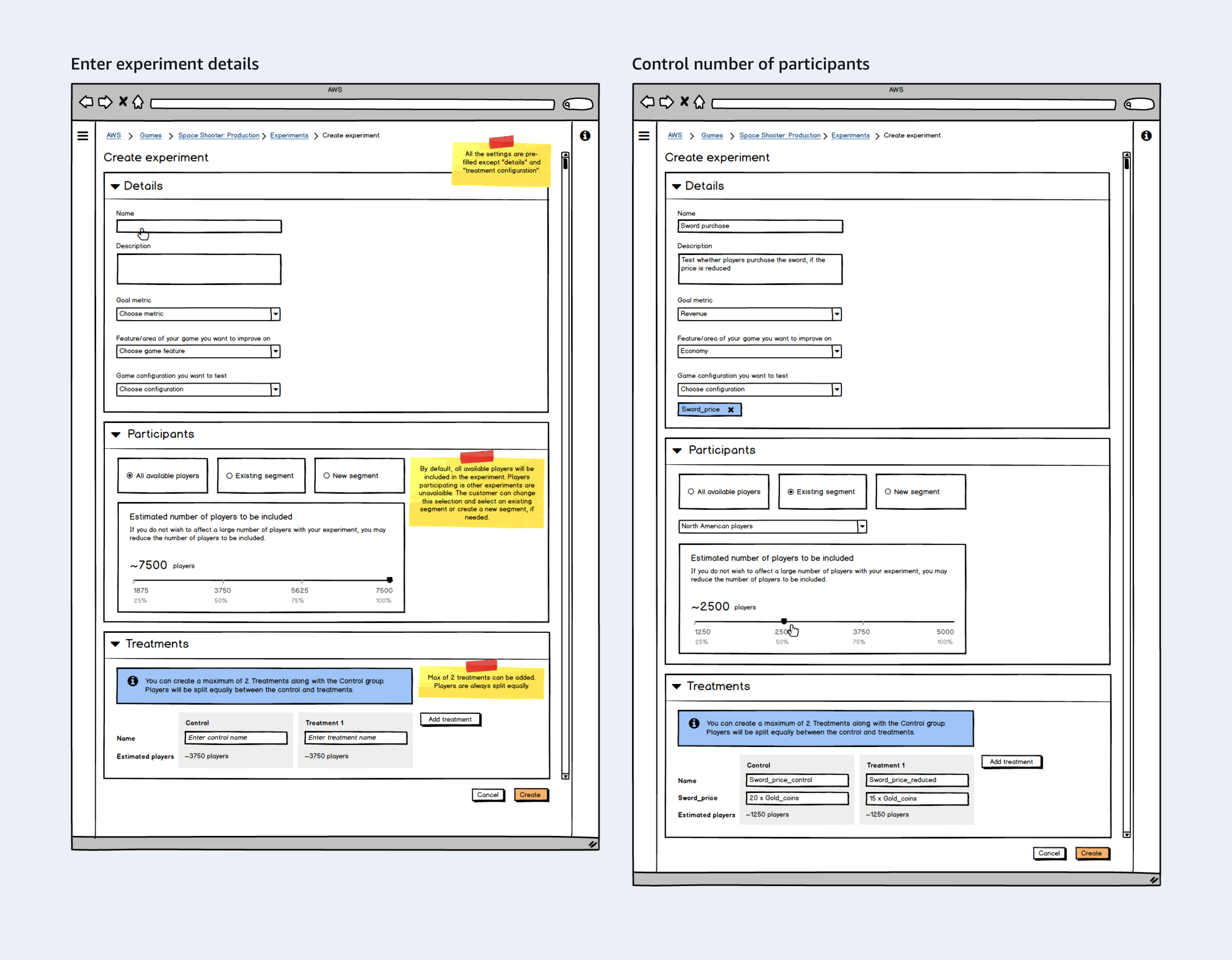

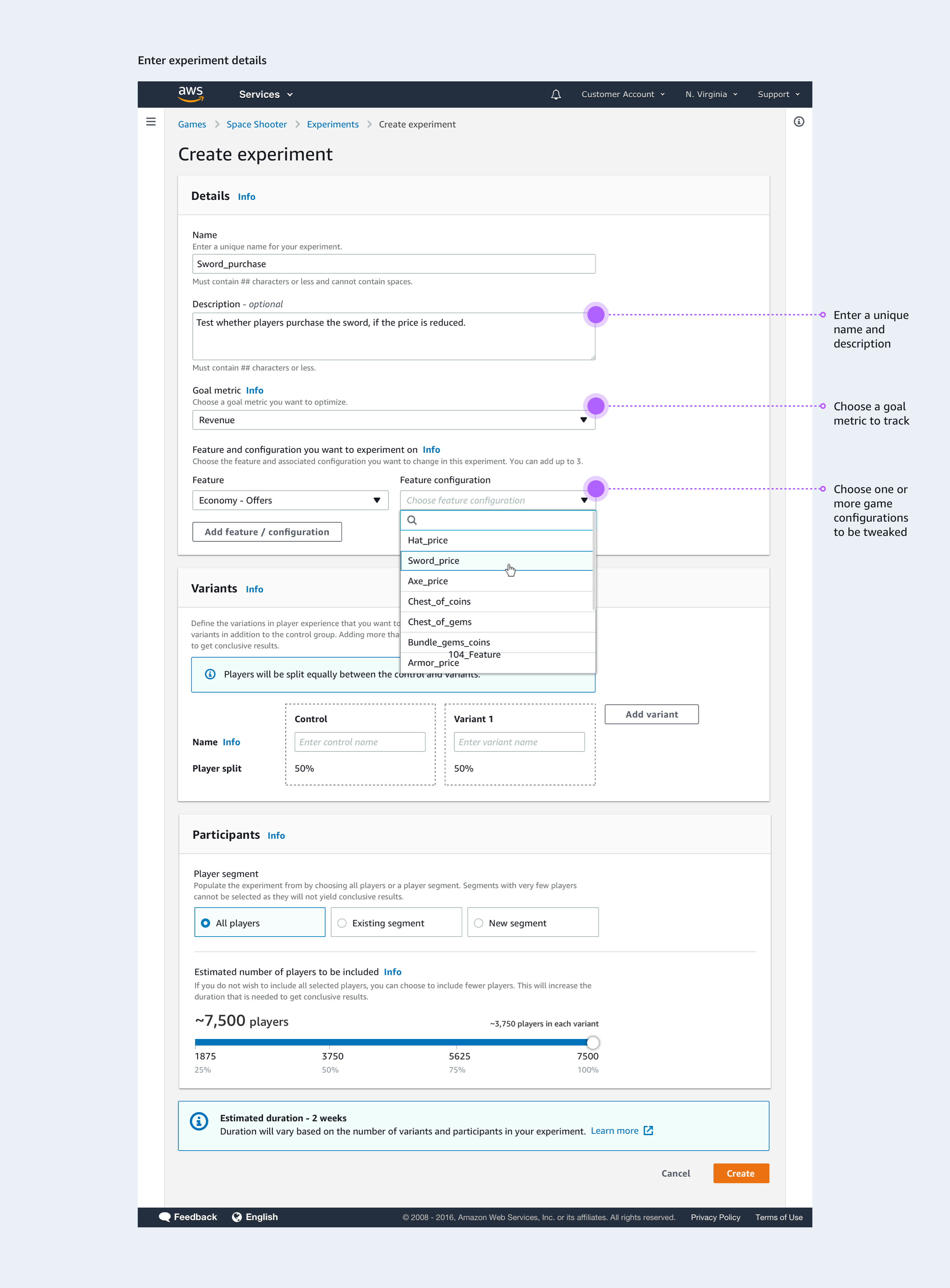

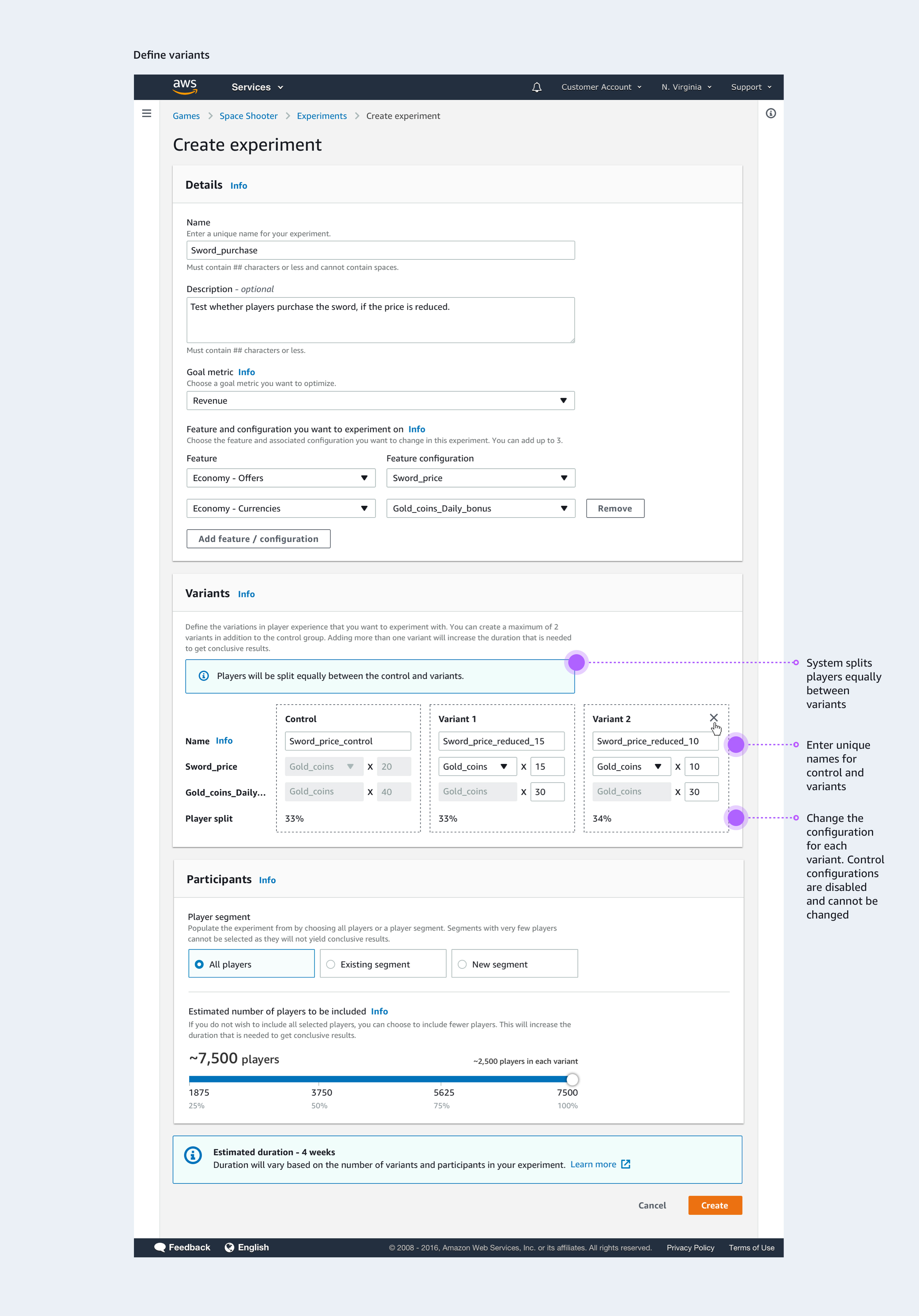

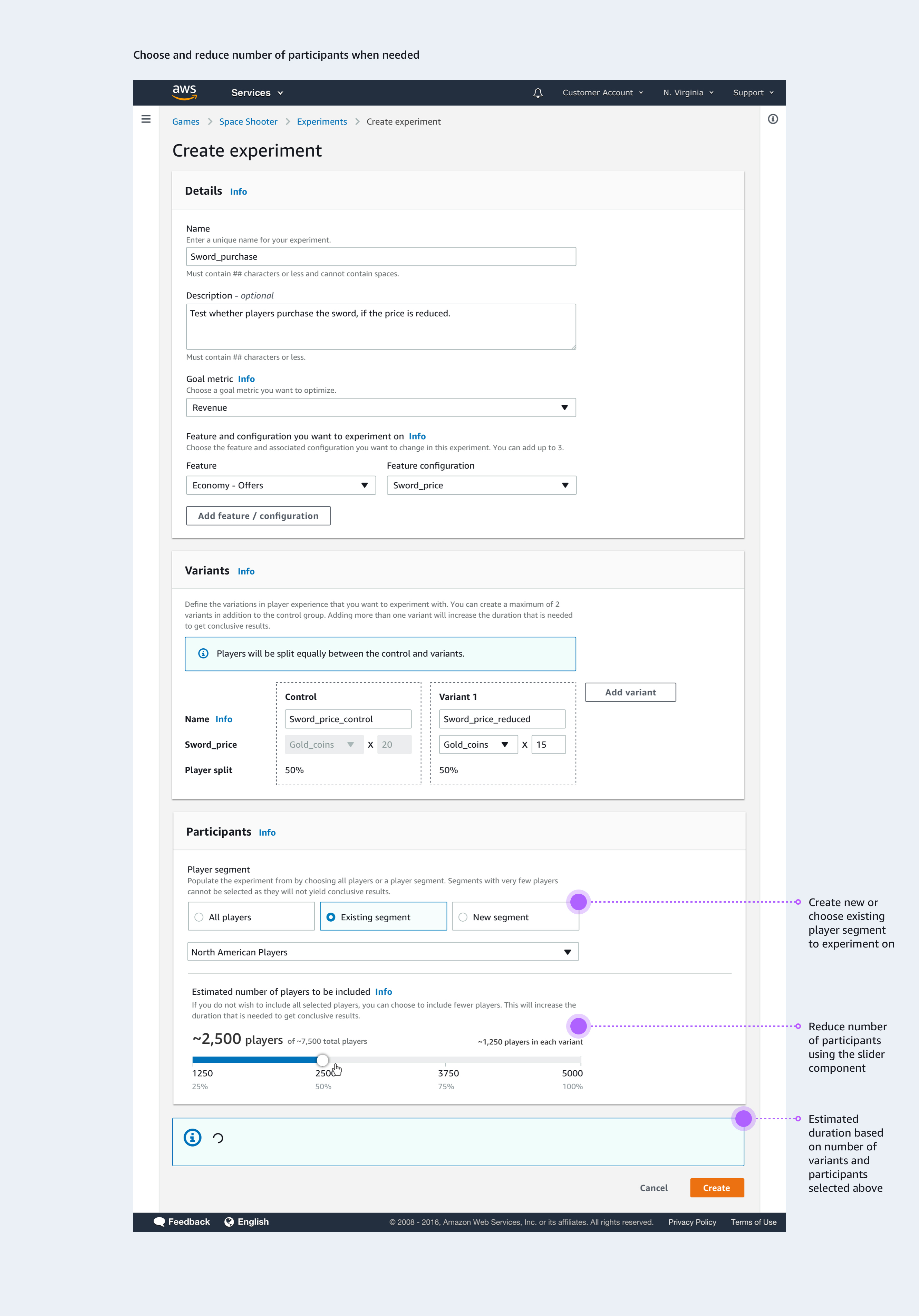

Customers can set up an experiment and configure the parameters they want to test.

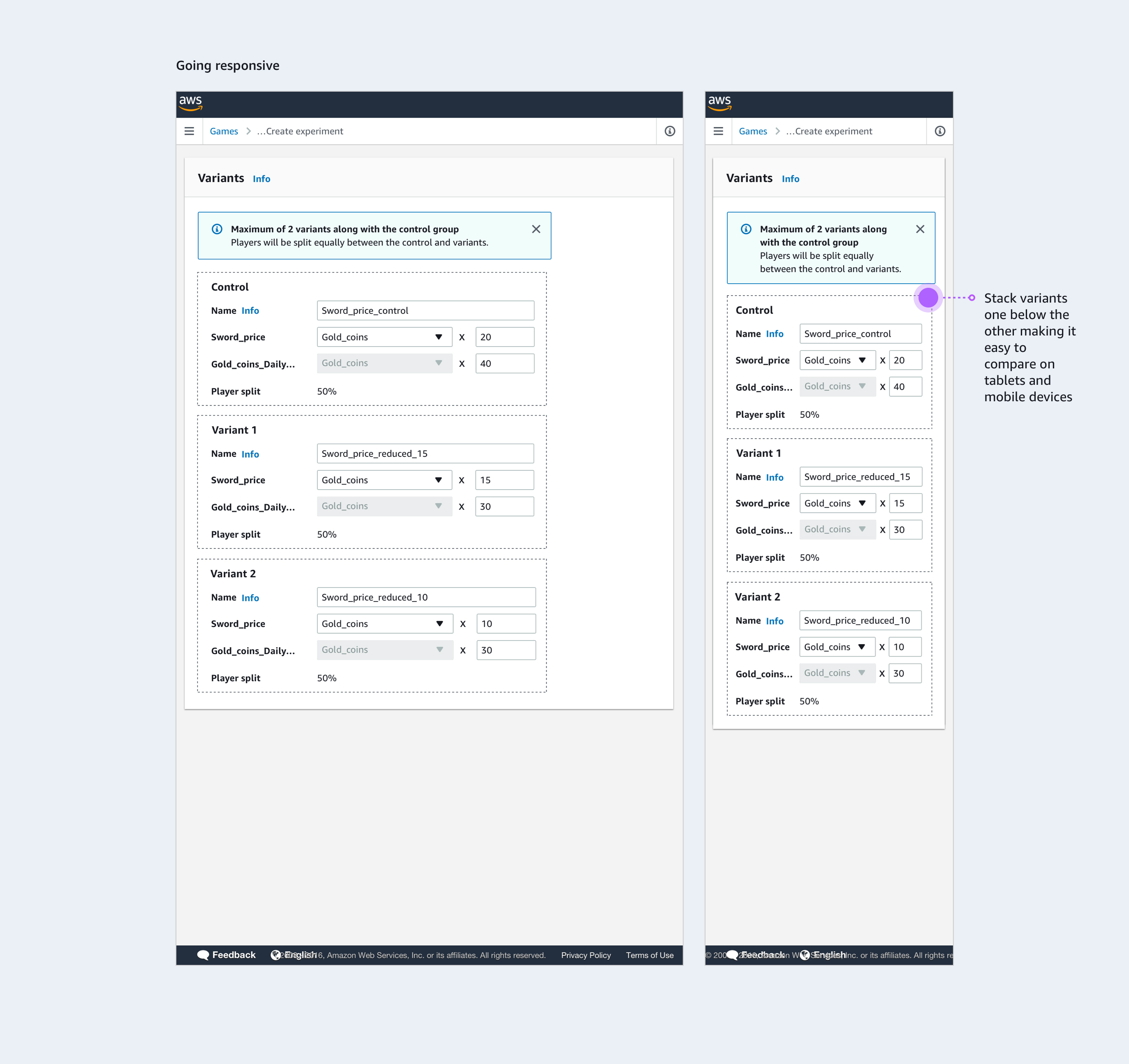

They specify different variants for player segments. I explored layouts that make comparisons intuitive, responsive, and clear across all screen sizes.

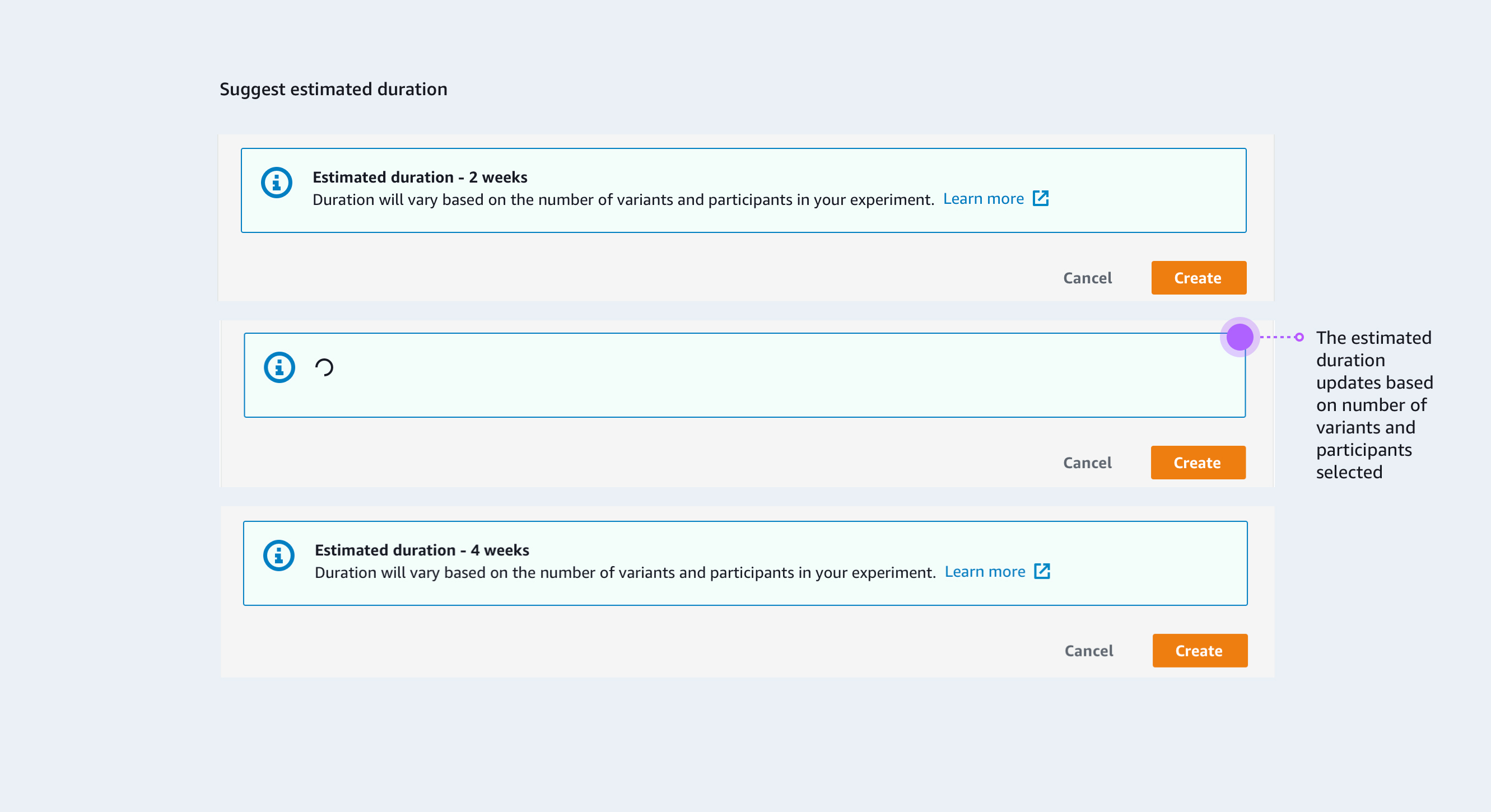

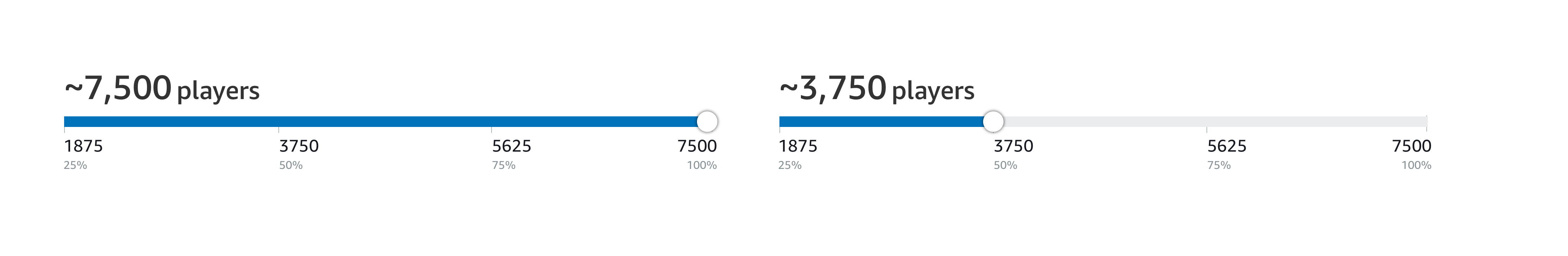

Customers select player segments for the experiment. Based on usability feedback, we added guidance on experiment duration, factoring in the number of variants and participants.

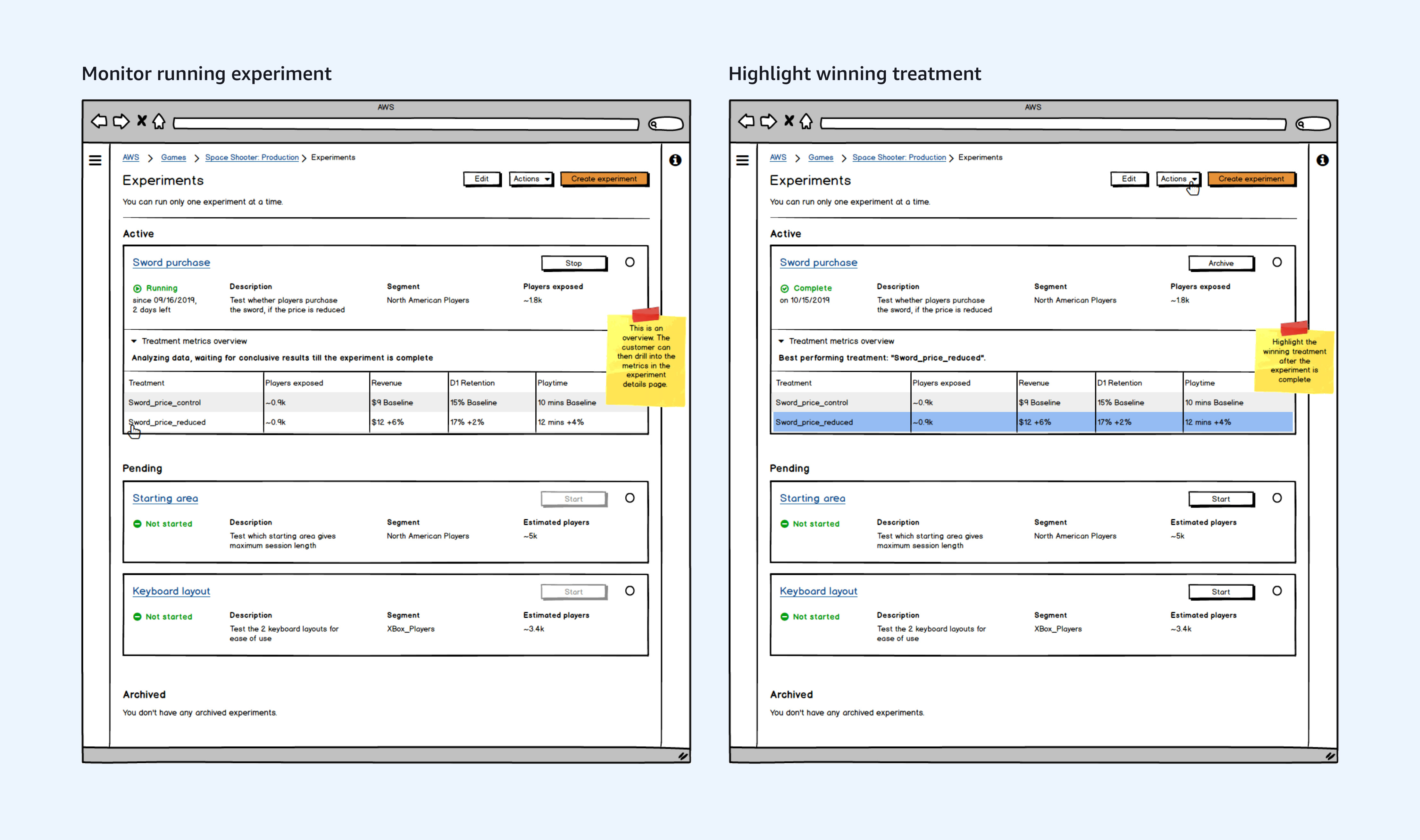

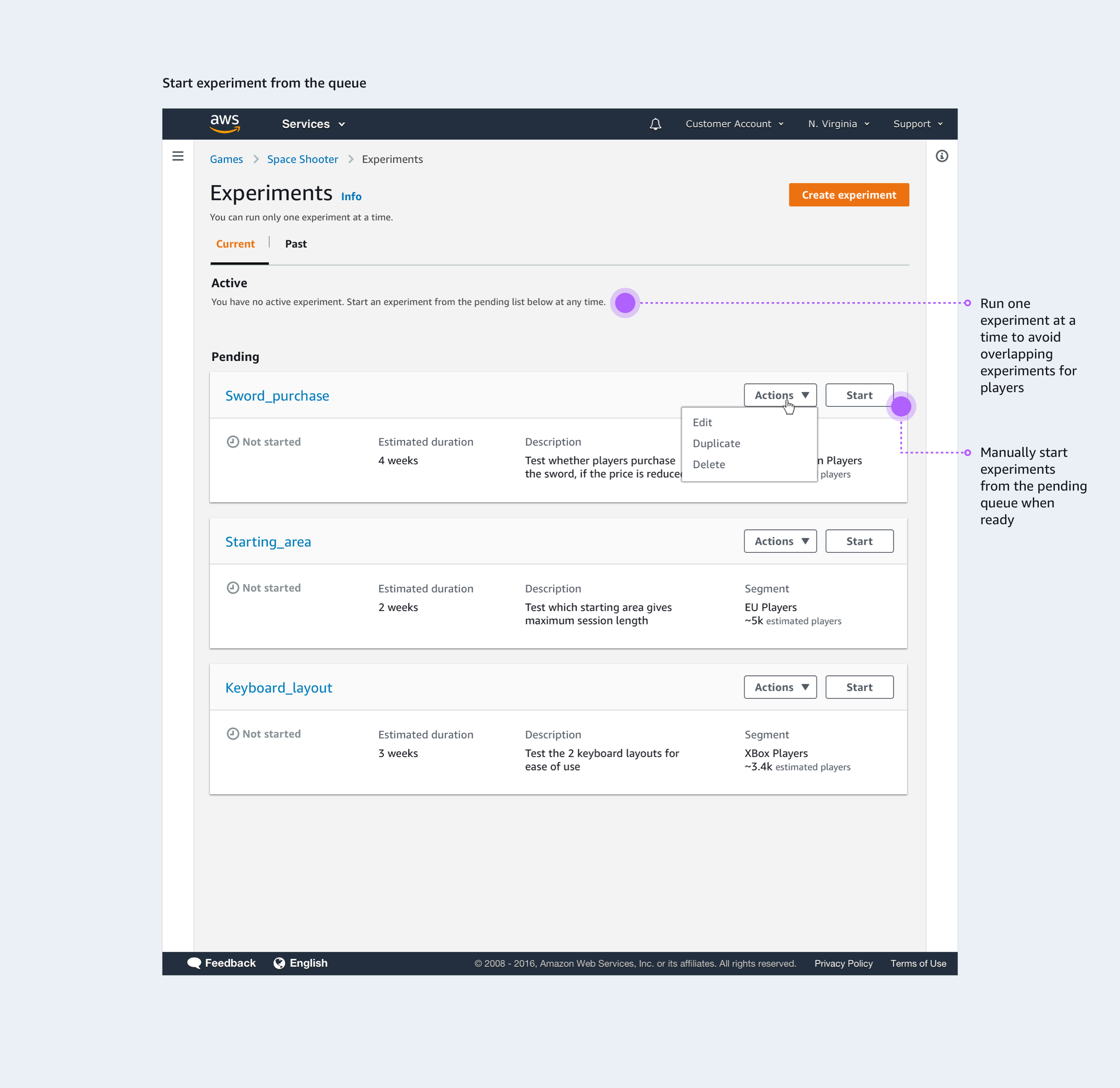

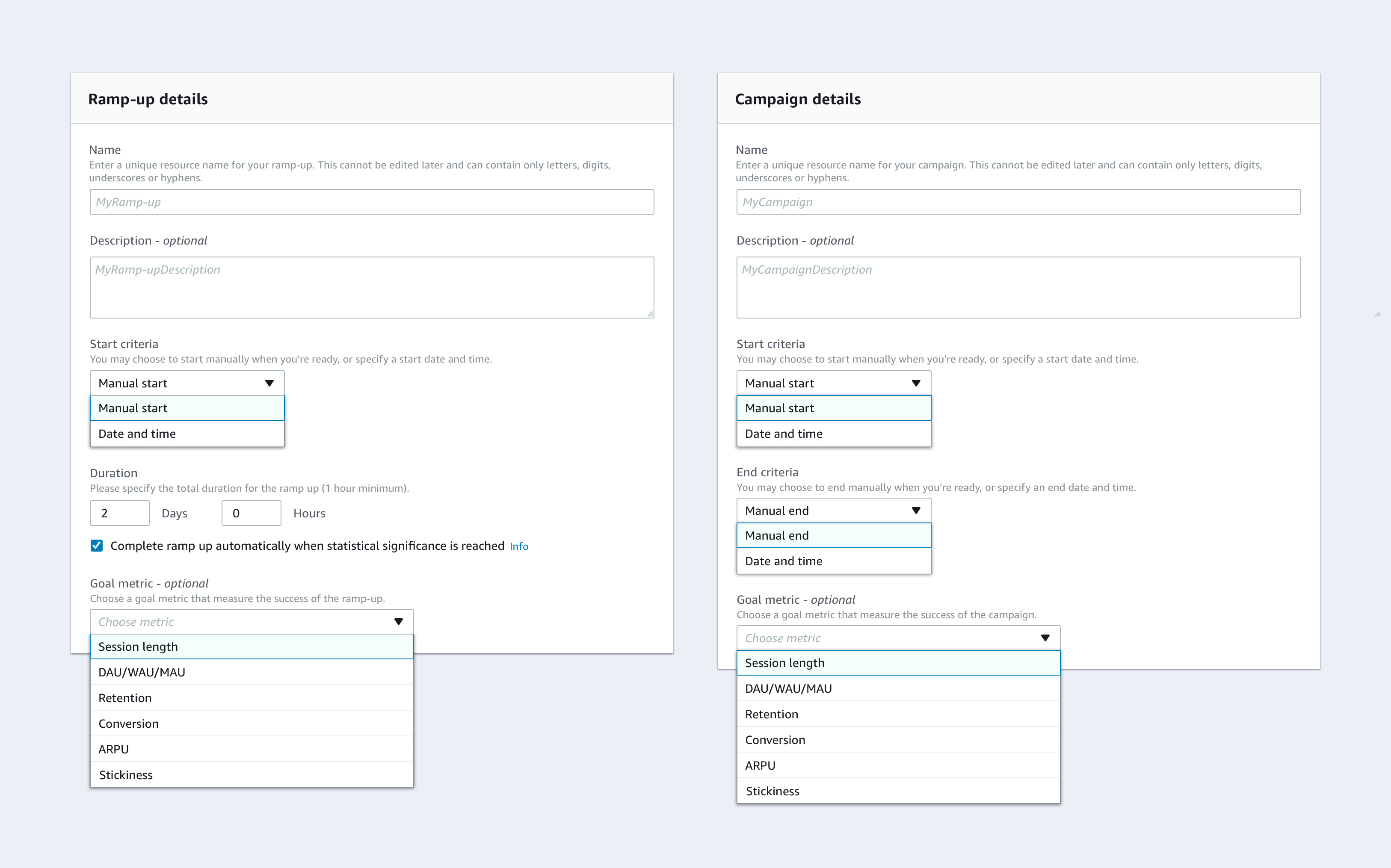

Creating an experiment is separate from starting or stopping it. For the MLP, customers start and stop experiments manually; pre-scheduled runs are planned for the future.

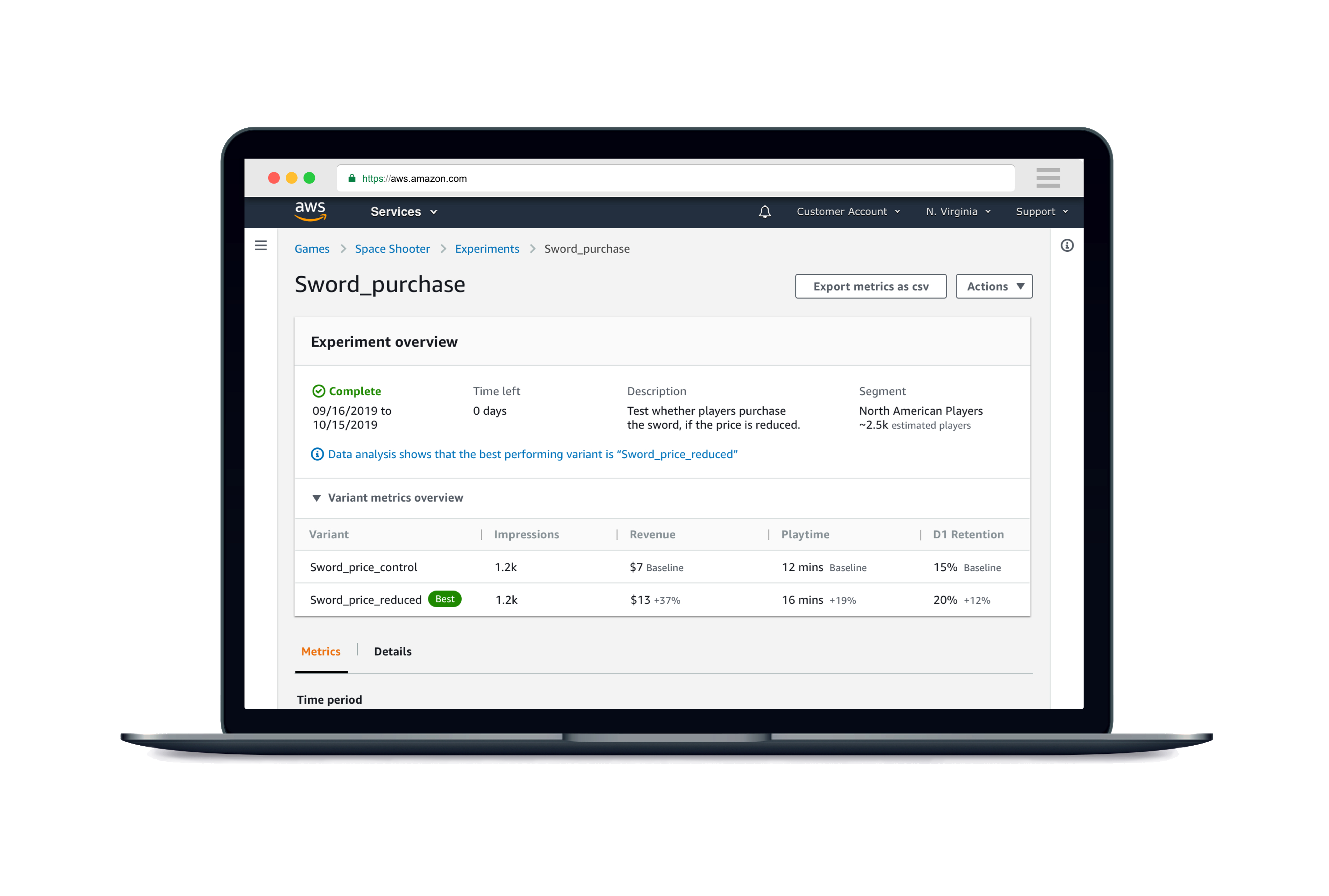

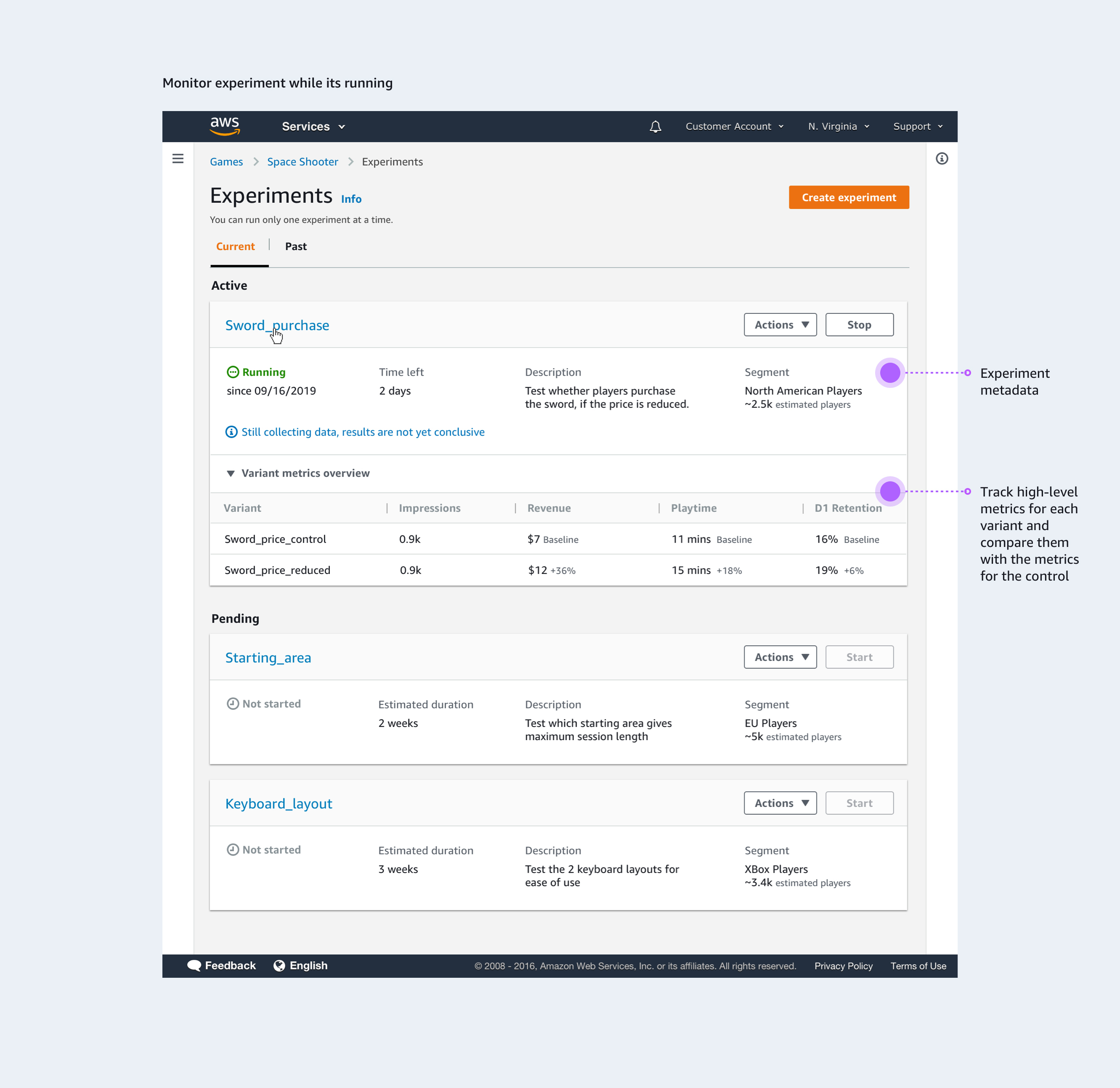

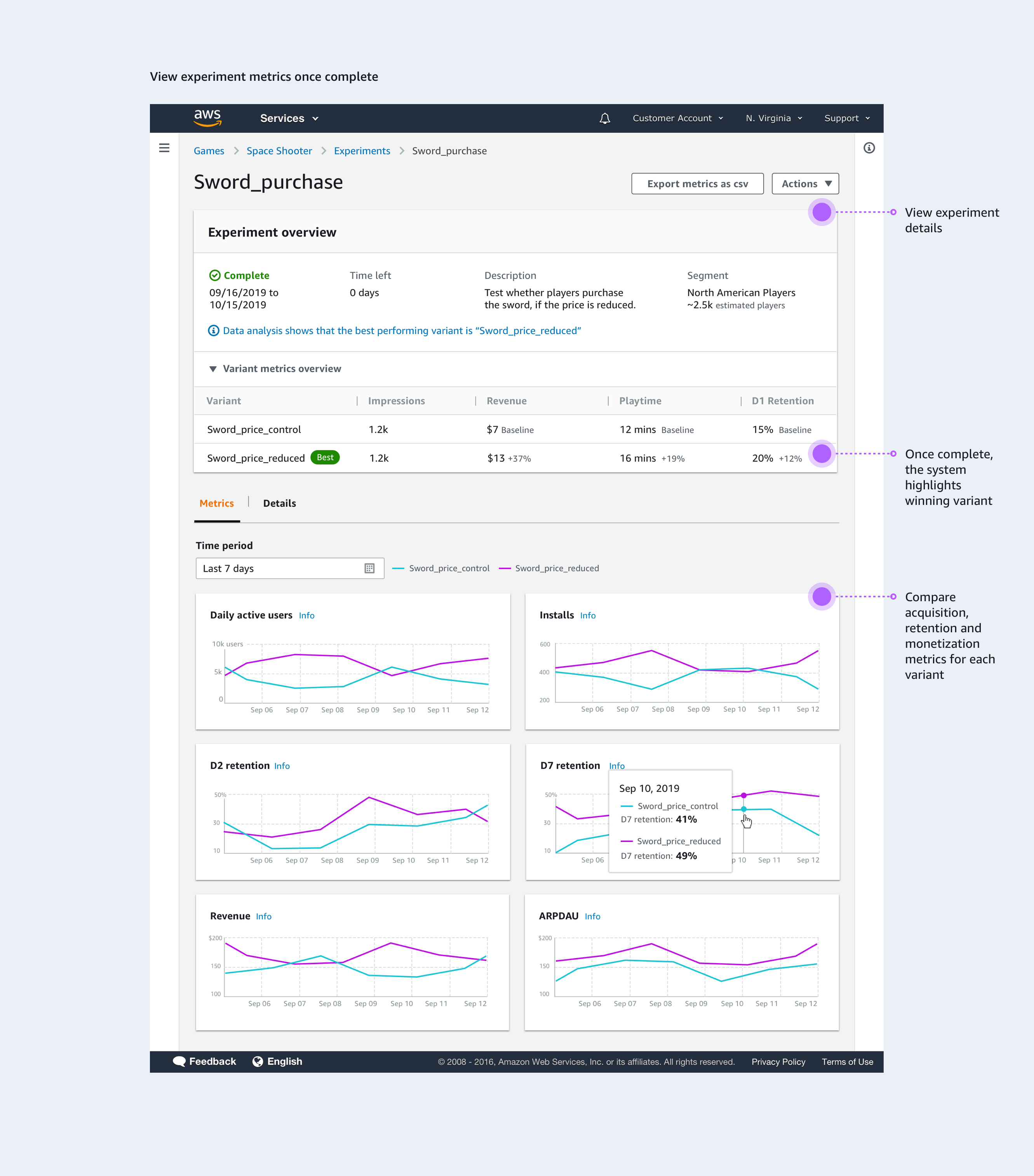

Customers track high-level metrics for running experiments. Once an experiment completes, the system identifies the winning variant. The main KPIs (acquisition, retention, and monetization) are highlighted to enable holistic assessment.

The existing design system did not have a counter visualization pattern for highlighting an important number. I designed a couple of pattern variations and worked with the design system team to develop specifications and document usage scenarios. The pattern was later borrowed by designers working on other services such as Amazon EC2 and Amazon Pinpoint.

To strengthen collaboration among designers who owned different products at AWS, I set up a weekly critique process with a sign-up sheet, defined roles, clear guidelines, and note-taking templates. This enabled 15 critique sessions in four months and boosted participation and cross-team collaboration.

The teams at Amazon Game Studios adopted the service and used it to conduct A/B tests on games like New World, Crucible, and The Grand Tour Game.

Following the success of the experimentation feature, internal teams requested two more features, which I iterated on: one for running promotional campaigns and one for ramping up new player experiences.

This project taught me AWS core fundamentals and how to work through technical complexity. I dove into the tech, learned how the APIs and SDKs worked, and ensured parity between the UI and CLI. With a new, ambiguous service and as the sole designer, I learned to break big problems into manageable pieces, design simple yet scalable solutions, and communicate effectively under tight deadlines.

I'm always open to discussing new opportunities, collaborations, or just chatting about design. Feel free to reach out!

divs.hariharan@gmail.com

Copyright © Divya Hariharan 2025